Terafab is Musk’s effort to build a tighter compute manufacturing stack for Tesla, xAI, and SpaceX.

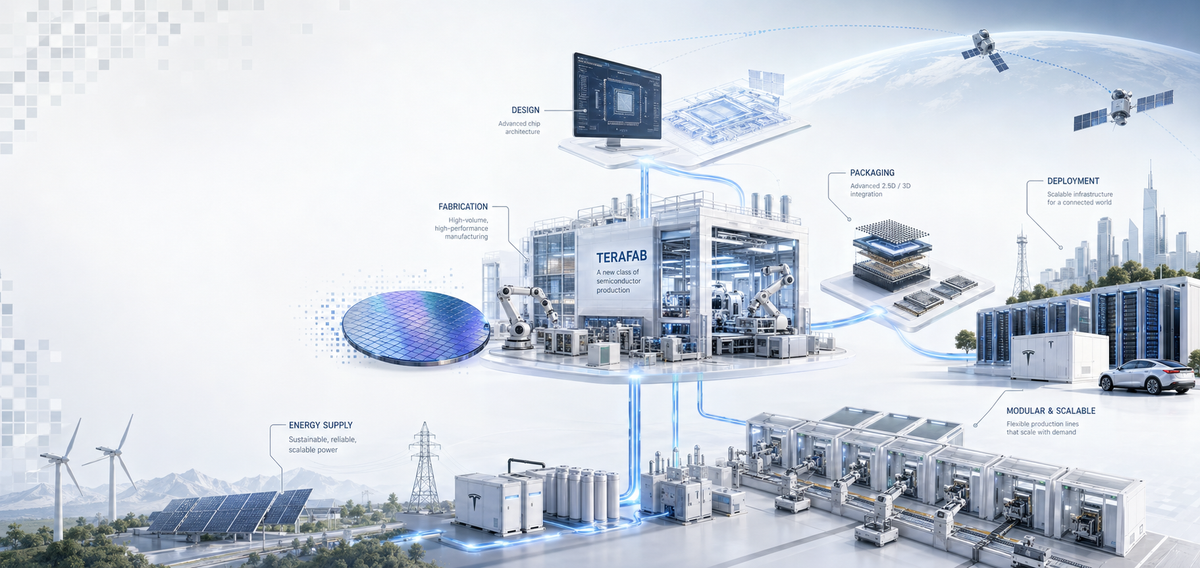

Elon Musk’s Terafab project is best understood as a proposal to reorganize the physical production of AI compute around a much tighter relationship between design, fabrication, packaging, deployment, and energy supply. The public discussion around the project has often reduced it to a single image: another ambitious Musk factory. That framing is too narrow. Terafab appears instead as a manufacturing architecture meant to support the next stage of Tesla, xAI, and SpaceX simultaneously. It links semiconductor production to robotics, autonomy, launch capability, and the possibility of orbit-based compute infrastructure. The project’s core proposition is that the present chip supply chain, while sophisticated, is too slow and too segmented for the scale and cadence of compute Musk believes his companies will require.

Recent reporting suggests that this proposition is now beginning to translate into early industrial activity. Musk has outlined an initial “advanced technology fab” in Austin, expected to cost approximately $3 billion and designed primarily as a research facility capable of producing a few thousand wafers per month. The stated purpose is to test new approaches to chip design, fabrication, and integration within a tightly controlled environment. In parallel, teams have begun coordinating directly with semiconductor equipment suppliers, in some cases offering premium pricing to accelerate access to constrained tools. This suggests that Terafab is no longer only a systems argument, but an attempt to reorganize real industrial inputs around speed and integration.

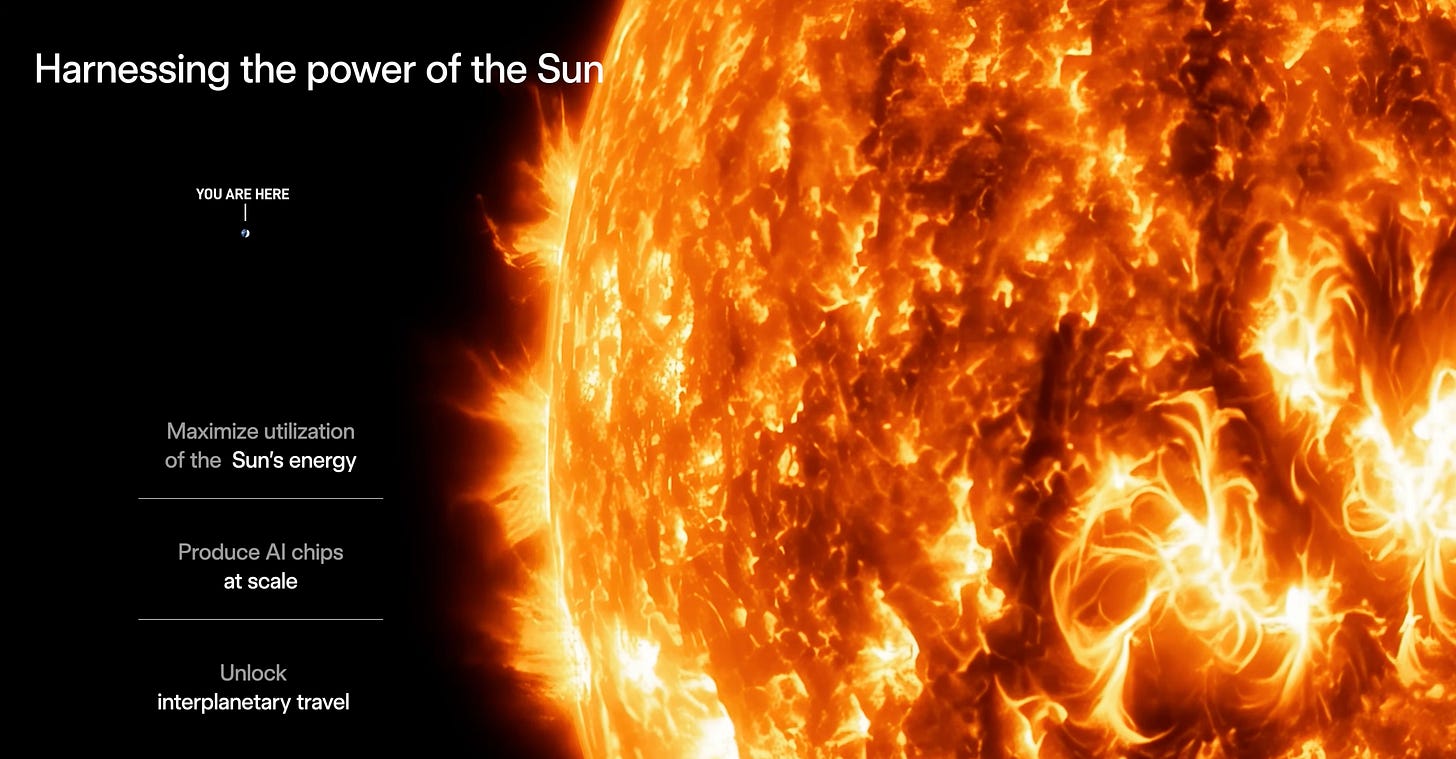

Terafab sits inside a much larger theory of technological development. Musk frames the project in civilizational terms, invoking the Kardashev scale and arguing that human progress will eventually be bounded by the rate at which civilization can capture and use energy. He notes that humanity is not yet a true Type I civilization and emphasizes how little of the sun’s available energy is actually being converted into useful power on Earth. The underlying implication is that future abundance depends on scaling both power and compute by orders of magnitude, not through marginal improvements to present systems but through a different industrial configuration. Terafab is presented as a first practical step in that direction: large by current standards, small by the standard of the long-term system Musk has in mind.

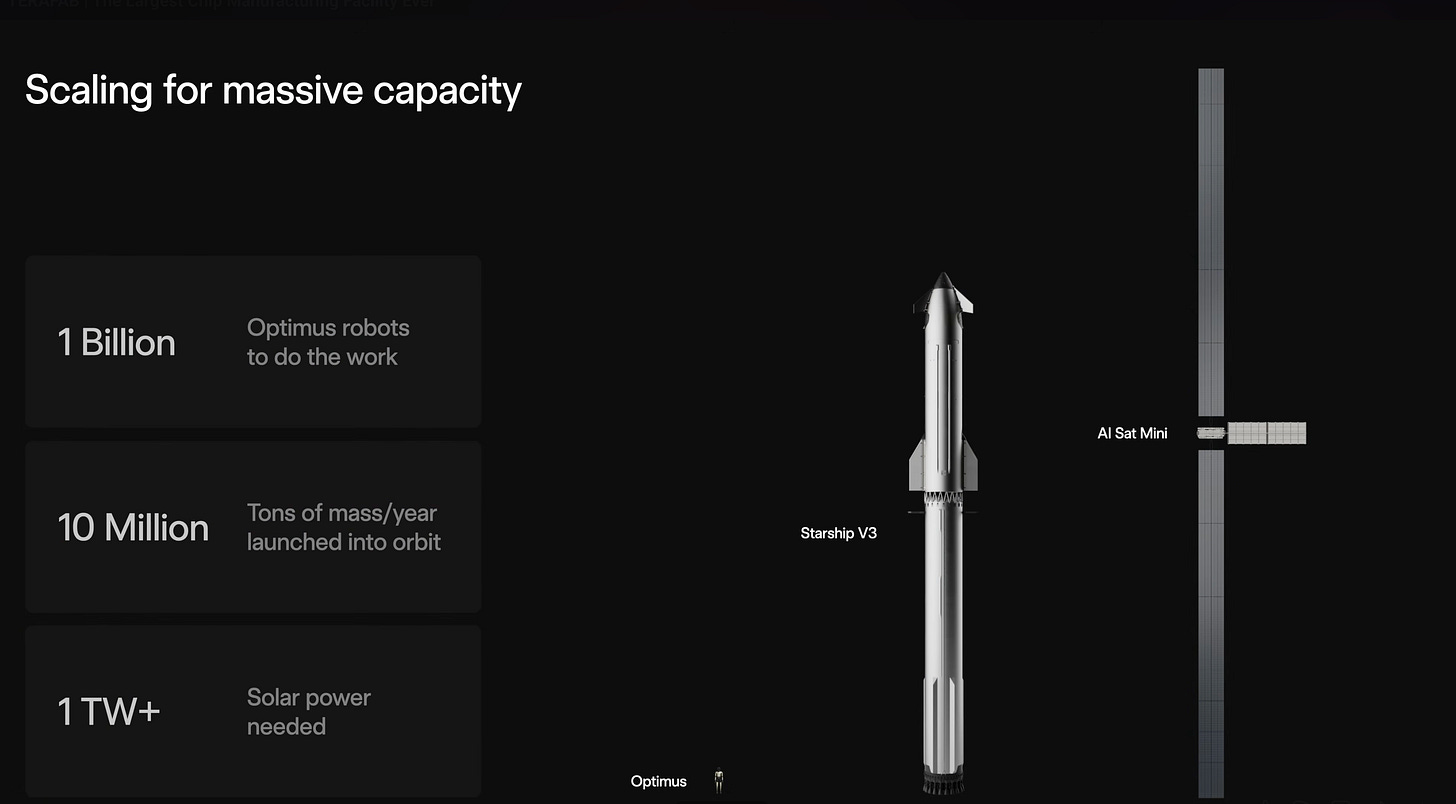

At the same time, Musk has begun to attach more explicit quantitative framing to this vision. The long-term target is approximately 1 terawatt of annual compute production, roughly double current U.S. compute capacity, and achieving that scale could require between $5 trillion and $13 trillion in capital expenditure. These numbers define the scale at which Musk believes future energy and computing systems must operate to support robotics, autonomy, and large-scale AI deployment.
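The figures above can be reduced to a simple unit cost. The following is a minimal back-of-envelope sketch, not a model of the project's actual economics: it divides the quoted capital range by the quoted 1-terawatt target, and reads "roughly double" as an implied baseline of about half a terawatt. The function and constant names are illustrative, and treating the whole capital range as buying exactly one terawatt of annual output is a simplifying assumption.

```python
# Back-of-envelope arithmetic on the figures quoted in the text.
# All names here are illustrative; the inputs are the article's numbers.

TARGET_WATTS = 1e12        # ~1 terawatt of annual compute production
CAPEX_LOW_USD = 5e12       # low end of the quoted capital range ($5 trillion)
CAPEX_HIGH_USD = 13e12     # high end of the quoted capital range ($13 trillion)


def capex_per_watt(capex_usd: float, watts: float) -> float:
    """Capital cost per watt, assuming the capex buys exactly `watts` of output."""
    return capex_usd / watts


def implied_baseline_watts(target_watts: float, multiple: float = 2.0) -> float:
    """Baseline implied by 'roughly double': target divided by the multiple."""
    return target_watts / multiple


if __name__ == "__main__":
    low = capex_per_watt(CAPEX_LOW_USD, TARGET_WATTS)
    high = capex_per_watt(CAPEX_HIGH_USD, TARGET_WATTS)
    print(f"Implied capex: ${low:.0f} to ${high:.0f} per watt")
    baseline_tw = implied_baseline_watts(TARGET_WATTS) / 1e12
    print(f"Implied baseline: {baseline_tw:.1f} TW")
```

On these inputs the range works out to between $5 and $13 per watt of annual output, which is a useful way to compare the target against other capital-intensive infrastructure.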

Terafab is described at once as a fab, an AI hardware program, a robotics enabler, and a bridge to orbital infrastructure. In ordinary semiconductor discourse, those categories would be separated into different sectors, different timelines, and often different firms. Here they are collapsed into a single argument. SpaceX provides mass to orbit, Tesla provides robotics and large-scale industrial execution, xAI provides growing internal compute demand, and Terafab is meant to supply the silicon and packaging substrate that binds them together. Intel’s entry into the project reinforces this interpretation.

More concretely, the emerging structure suggests a division of roles: Tesla is expected to build and operate the initial research fab, while SpaceX may take responsibility for scaling the larger industrial footprint. The full ownership and operating structure remains unresolved, reflecting the project's early stage and the complexity of coordinating multiple entities within a single manufacturing system.

At the manufacturing level, the distinguishing feature of Terafab is not simply planned output but the attempt to compress the semiconductor iteration loop. Musk describes an Austin “advanced technology fab” intended to house the equipment necessary to make logic, memory, lithography masks, packaging, and testing within a single building. The value of a facility like this would not come only from ownership of capacity. It would come from reducing the time between design revision and measured silicon performance. The proposed loop is straightforward in principle: produce or modify a mask, fabricate the chip, test it, revise the design, and repeat without relying on the conventional long chain of external handoffs.

The relevance of that change increases as AI chips become more specialized and as packaging becomes more central to system performance. Intel’s own materials on advanced packaging underline how much of modern compute capability now depends on combining multiple dies within a single package. Performance, bandwidth, thermals, and density increasingly depend on packaging and integration choices rather than on logic scaling alone. That is one reason the repeated pairing of “logic, memory, and packaging” in the Terafab discussion is significant.

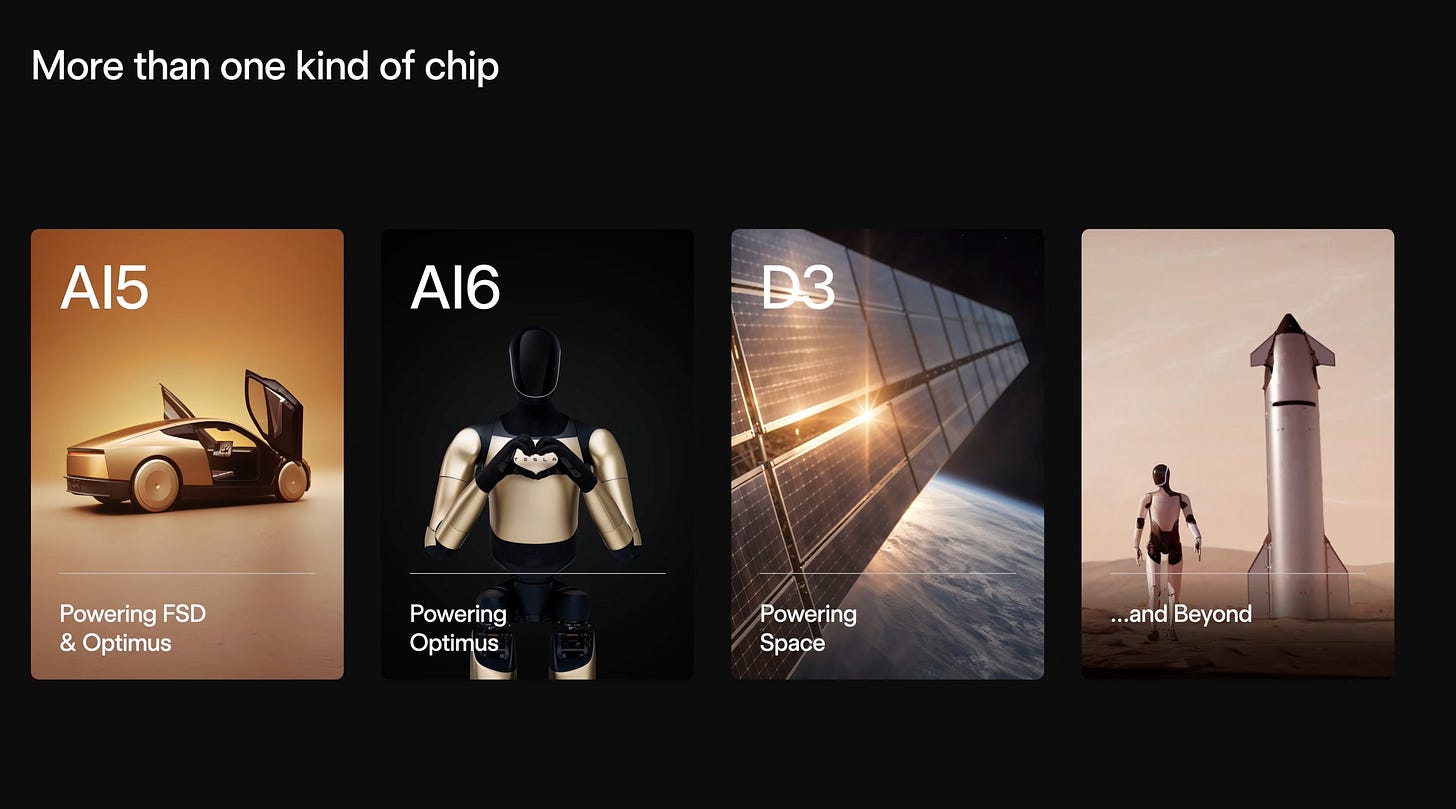

Recent disclosures also suggest that Terafab may extend further upstream than initially assumed. SpaceX is reportedly exploring the development of its own GPUs, suggesting the project may encompass not only fabrication and packaging but also chip architecture. If realized, this would further tighten the integration between hardware design and deployment environments.

The project’s semiconductor logic also rests on the assumption that future demand will be internally generated at a very large scale. Tesla already positions efficient inference hardware as central to its autonomy and robotics ambitions. It is already involved not only in model development but in inference chip architecture, silicon validation, and deployment into vehicles. xAI, by definition, is compute-hungry. SpaceX contributes a different form of demand, less through cloud-style workloads than through the physical capability to transport compute into new operating environments.

Terafab is expected to produce two broad classes of chips. One class is for edge inference, mainly for Tesla vehicles and especially Optimus humanoid robots. The other is higher-power compute designed specifically for AI data centers, including those potentially deployed in space. These classes imply different optimization problems, thermal assumptions, packaging requirements, and notions of useful performance.

The emphasis on Optimus is especially important. Musk argues that humanoid robot production could eventually exceed vehicle production by a wide margin, sketching a future in which global robot output reaches between one and ten billion units per year, compared with about one hundred million vehicles, or roughly ten to one hundred times today's vehicle volume. That figure is speculative in the extreme, but it performs an important analytic function. It establishes the internal demand model under which Terafab is being imagined. In that model, the key semiconductor challenge is not a modest expansion of current automotive compute. It is the industrialization of inference at robot scale. Once that possibility becomes the denominator, a terawatt-per-year compute target becomes an attempt to size a manufacturing system against a radically different future workload distribution.

Musk has also been arguing that space may become an economically superior environment for AI infrastructure once launch costs and solar deployment costs fall far enough. Orbit offers continuous sunlight, avoids atmospheric attenuation and seasonal variability, reduces battery dependence, and allows lighter solar structures than terrestrial installations. On his account, AI in space could become cheaper than AI on Earth within only a few years after the relevant cost-to-orbit threshold is crossed. That claim should be treated cautiously, but it points in a structurally important direction. It means Terafab is being designed not only for a different chip supply chain, but for a different geography of compute. Its long-term purpose is inseparable from the assumption that some meaningful share of future intelligence production migrates toward where power can be scaled more cleanly.

This line of thinking is increasingly echoed across adjacent efforts. Companies such as Aetherflux are exploring similar combinations of energy generation and compute in orbit, reinforcing the idea that future infrastructure may be co-designed across power and computation rather than optimized independently. In that sense, Terafab can be understood as one component of a broader shift toward integrated energy-compute systems.

That in turn explains why Starship is woven directly into the Terafab narrative. Reaching the desired compute scale would require around 10 million tons to orbit per year, at approximately 100 kilowatts per ton. Musk talks about early AI satellites around the one-hundred-kilowatt level, with future systems expanding toward the megawatt range. In the same way that some industrial visions are constrained by rail capacity or electric grid interconnection, this one is constrained by access to orbit. SpaceX, therefore, functions less as a separate business in the story and more as the transport layer for a compute architecture. The manufacturing thesis of Terafab cannot be separated from that launch thesis without losing much of its logic.
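The launch figures in this paragraph are mutually consistent, which a short calculation makes visible. The sketch below multiplies the quoted tonnage by the quoted power density and converts the result to terawatts. The per-launch payload of 100 tons and the assumption that every satellite is a 100-kilowatt unit are hypothetical inputs added for illustration; neither is stated in the text as a planning figure.

```python
import math

# Figures quoted in the text.
TONS_TO_ORBIT_PER_YEAR = 10_000_000   # ~10 million tons per year
KW_PER_TON = 100                      # ~100 kilowatts of compute per ton
SATELLITE_KW = 100                    # early AI satellite class, ~100 kW

# Hypothetical input, NOT from the text: assumed payload per launch.
ASSUMED_TONS_PER_LAUNCH = 100


def annual_compute_terawatts(tons_per_year: int, kw_per_ton: int) -> float:
    """Compute power lofted per year, converted from kW to TW (1 TW = 1e9 kW)."""
    return tons_per_year * kw_per_ton / 1e9


def satellites_per_year(tons_per_year: int, kw_per_ton: int, satellite_kw: int) -> int:
    """Satellite count if all lofted power is split into equal-size units."""
    return (tons_per_year * kw_per_ton) // satellite_kw


def launches_per_year(tons_per_year: int, tons_per_launch: int) -> int:
    """Launches needed at a given payload per launch, rounded up."""
    return math.ceil(tons_per_year / tons_per_launch)


if __name__ == "__main__":
    tw = annual_compute_terawatts(TONS_TO_ORBIT_PER_YEAR, KW_PER_TON)
    sats = satellites_per_year(TONS_TO_ORBIT_PER_YEAR, KW_PER_TON, SATELLITE_KW)
    launches = launches_per_year(TONS_TO_ORBIT_PER_YEAR, ASSUMED_TONS_PER_LAUNCH)
    print(f"Compute lofted per year: {tw:.1f} TW")
    print(f"100 kW-class satellites per year: {sats:,}")
    print(f"Launches per year at the assumed payload: {launches:,}")
```

Ten million tons at 100 kilowatts per ton comes to one terawatt per year, matching the headline compute target. Under the assumed payload, that tonnage would mean on the order of one hundred thousand launches annually, which illustrates why launch cadence is the binding constraint in this argument.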

There is an important industrial precedent buried inside that structure. Musk has repeatedly tried to internalize strategic bottlenecks once they became large enough to determine the pace of a company's development. Tesla internalized charging infrastructure at scale through the Supercharger network. SpaceX attacked launch economics through reusability. Tesla has spent years attempting to turn manufacturing itself into a product-level competence rather than a downstream operational necessity. Terafab extends the same instinct into the semiconductor stack. The motivation is the belief that growth in autonomy, robotics, and AI will be constrained by an upstream layer that the current market treats as external and as a source of rationing. In that sense, Terafab is consistent with the broader Musk pattern. It emerges at the point where a purchased input becomes too strategically important to leave fully outside the firm's boundary.

Even with Intel involved, the competitive logic of Terafab is narrower than broad commentary often assumes. The project is unlikely to succeed by displacing Nvidia, TSMC, Samsung, Micron, and the wider semiconductor ecosystem across all fronts. A more plausible interpretation is that it competes through specificity and internal alignment rather than breadth. It serves internal demand first. It optimizes for custom inference and deployment environments in which Tesla and SpaceX have unusually precise demand visibility. It attempts to accelerate the design-fabrication-test loop in ways conventional supply chains do not naturally optimize for. It integrates launch capability and downstream hardware programs into the same planning boundary.

The most recent development that clarifies the broader system is the integration of Cursor into the SpaceX ecosystem. SpaceX has secured the right to acquire Cursor for approximately $60 billion or to enter a large-scale partnership focused on joint AI development. This introduces a new layer to the Terafab thesis. Cursor represents acceleration at the software and model-development layer, while Terafab targets acceleration at the hardware layer. The integration suggests an attempt to align both cycles—software iteration and silicon iteration—within a single coordinated system.

The significance is not that Cursor directly affects semiconductor manufacturing, but that it tightens the coupling between software demand and hardware supply. Faster model development increases pressure on specialized silicon, while faster silicon iteration enables more aggressive software experimentation. In this sense, the Cursor integration reinforces the central Terafab thesis: the limiting factor is no longer any single layer of the stack, but the speed at which all layers can co-evolve.

The broader significance of Terafab lies in what it says about the current stage of AI industrialization. For several years, AI was discussed largely at the model layer. Then attention shifted to GPUs and data centers. Terafab reflects the next move downward. It foregrounds packaging, iteration speed, energy sourcing, launch access, and manufacturing integration. It treats compute as infrastructure that must be designed, built, and deployed within a larger industrial system. Whether or not the specific Musk roadmap proves correct, that shift in emphasis is important in its own right. It suggests that the next phase of AI competition may depend less on model novelty alone and more on who can shape the physical substrate of intelligence production.

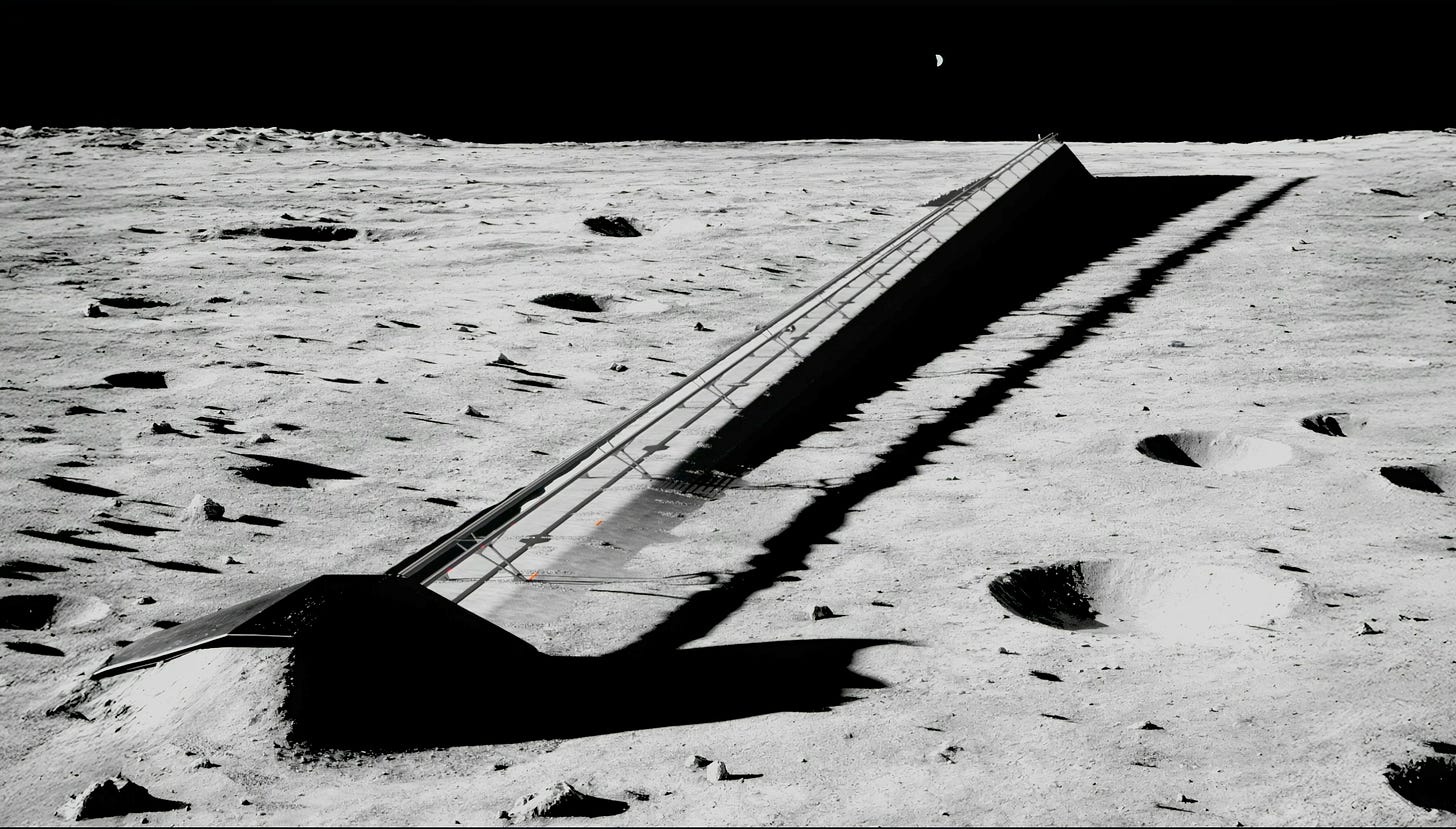

This perspective also helps explain why the project extends beyond the terawatt scale in Musk’s presentation. After Terafab comes a more speculative idea of “petafab,” tied to lunar infrastructure and mass drivers. Most of that material belongs more to long-range systems imagination than to current industrial planning. Still, it is not irrelevant. It shows that Musk treats Terafab as one rung on a ladder, with silicon production, orbital deployment, and eventually lunar logistics forming a continuum. The project is conceived less as a single factory than as an expandable compute-production infrastructure tied to successively less Earth-bound energy and transport systems.

About e1 Ventures

e1 Ventures backs founders at the frontiers of science and technology, where the hardest problems hide the greatest possibilities.

About Opulentia Ventures

Opulentia Ventures operates as a “VC Tribe,” consolidating resources from experienced investors to support pioneering companies focused on technological advancements, healthcare, and national security. Headquartered in the Washington, DC, metro area, the firm leverages deep government and defense-sector relationships to identify emerging opportunities at the intersection of innovation and national priorities.