Earth is hitting a wall on AI, not because we’ve run out of land, but because we’ve run out of grid capacity, water, and political will. Global data centers already consume 415 terawatt-hours of electricity annually, roughly 1.5% of all power generated on the planet, and demand is projected to surge 165% by 2030. Hyperscalers are projected to spend $600–700 billion in 2026 alone, with over $5 trillion in AI-specific data center investment expected over the next five years. Yet permitting battles, grid bottlenecks, water shortages, and community opposition are slamming the brakes on terrestrial expansion.

So what if the answer isn't building more data centers on Earth—but above it?

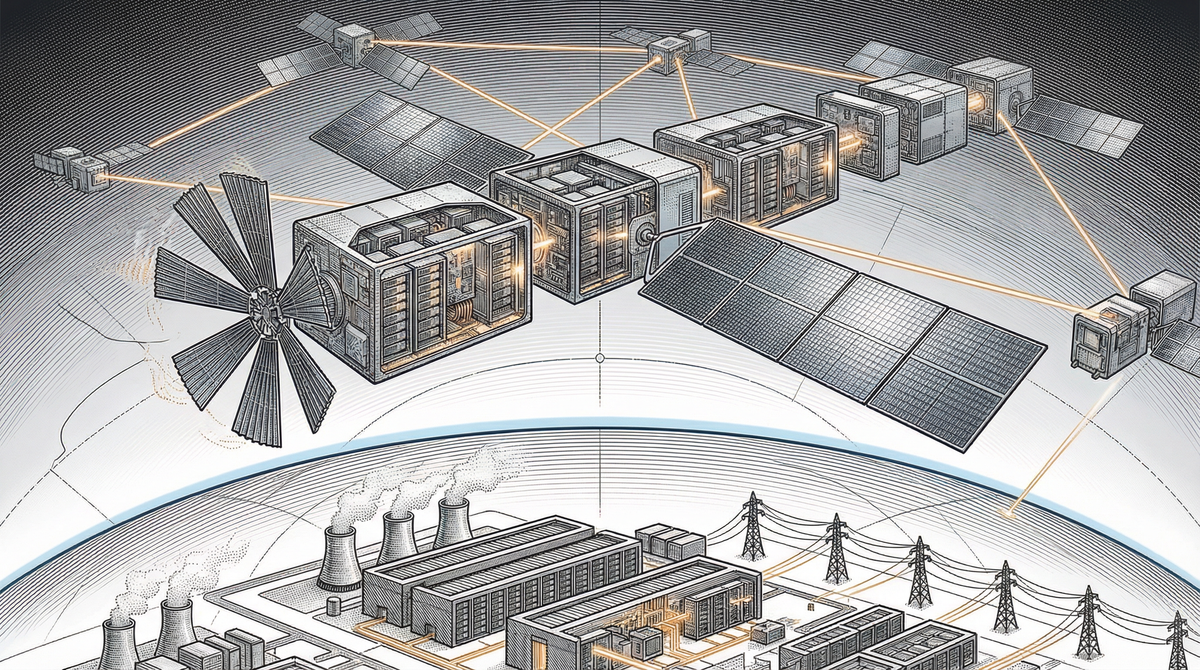

That’s no longer science fiction. In the past twelve months, startups have trained AI models on GPUs in orbit, tech giants have unveiled satellite constellation blueprints, and the largest launch provider on Earth has filed to build a million-node data center in space. A new compute frontier is opening — fast.

Not everyone is convinced. Very recently, OpenAI CEO Sam Altman called orbital data centers “ridiculous,” Gartner analysts labeled them “peak insanity,” and investor Jim Chanos dismissed them as “AI snake oil.” The critiques aren’t baseless: thermal management at gigawatt scale is unproven, radiation damage to commercial chips remains unsolved, and launching a million satellites into already-crowded orbits invites regulatory gridlock and debris catastrophe. The skeptics have a point—this could easily become the space industry’s next overhyped disappointment, joining solar power satellites and asteroid mining in the graveyard of ambitious-but-premature ideas.

But dismissing orbital compute outright ignores what’s already happened. Real GPUs are training real models in orbit today. The physics pencils out. And the companies pouring billions into this aren’t known for funding PowerPoint fantasies—they’re solving the hardest problems in aerospace, semiconductor design, and thermal engineering. The question isn’t whether the challenges are real. It’s whether the economics shift fast enough to make solving them worth it.

Why Space? The Physics Are Actually Compelling

The core pitch for orbital data centers rests on three advantages that Earth simply cannot match:

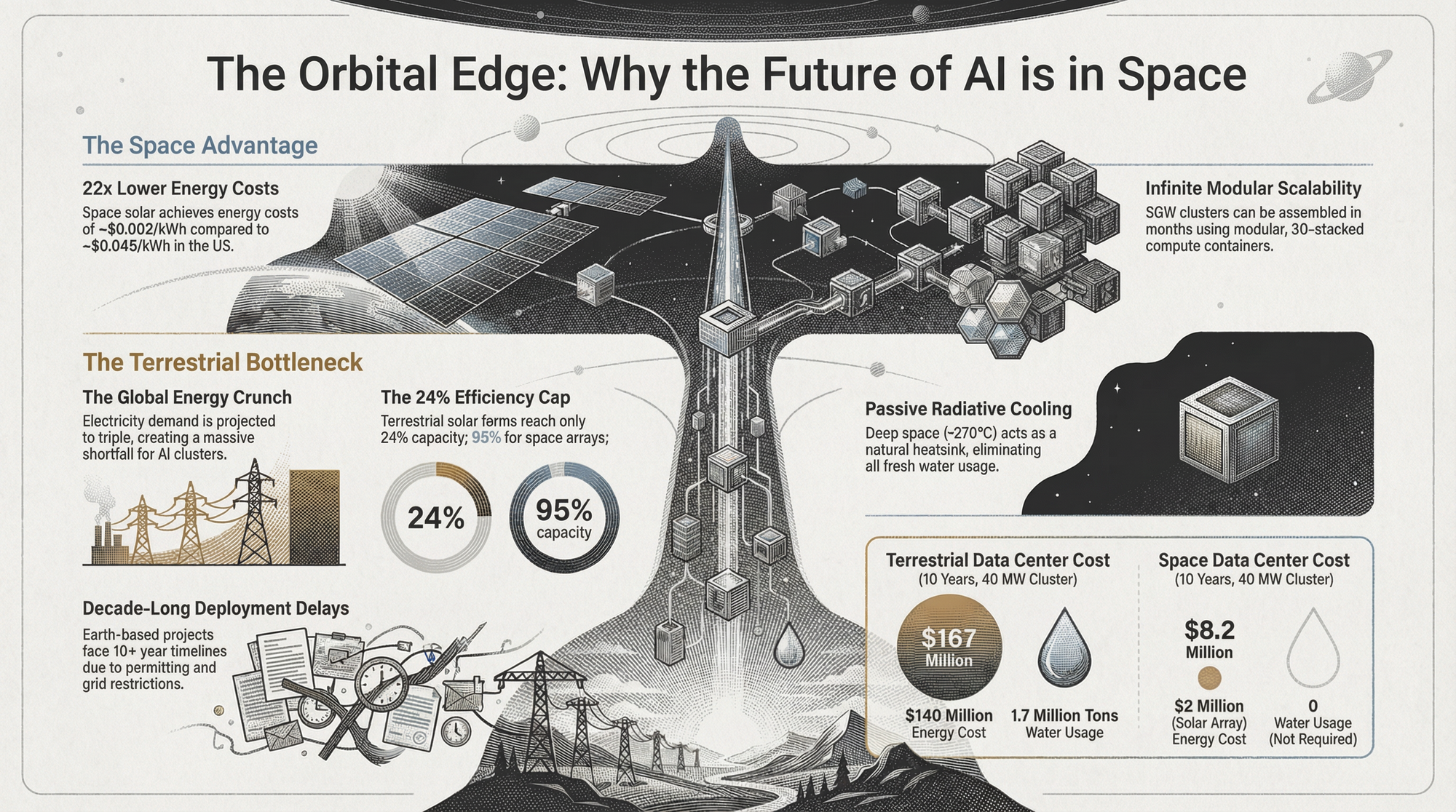

- Free, uninterrupted energy. In a dawn–dusk sun-synchronous orbit at 500–650 km, solar arrays can see the Sun virtually 24/7, with no clouds and minimal eclipses, delivering a much higher and more predictable capacity factor than ground-based solar. While today’s studies still put the levelized cost of space-based solar power above or at best comparable to terrestrial renewables, falling launch costs and higher orbital utilization point to a future where orbital energy could rival, and eventually undercut, the effective power prices paid by hyperscale data centers on Earth.

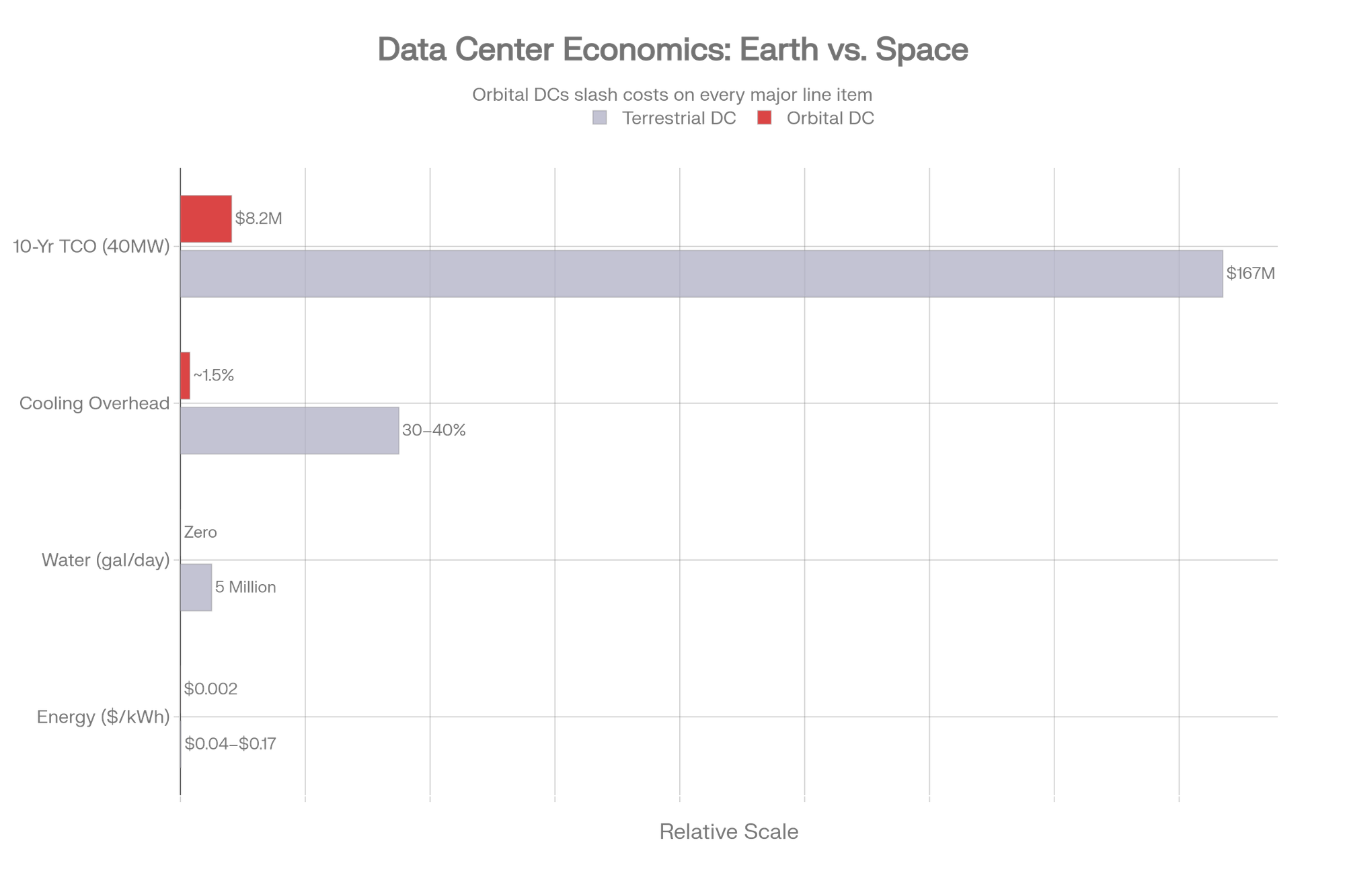

- Free cooling. Data centers are essentially giant heaters: every watt of compute becomes a watt of heat. On Earth, removing that heat requires massive chillers, evaporative towers, and up to 5 million gallons of water per day for large facilities. In space, heat radiates passively into the 2.7-Kelvin vacuum. The ISS's cooling loops handle 100 kW using just 1.5 kW of pump power, roughly 67 watts of heat moved per watt of pumping and a coefficient of performance that terrestrial systems can only dream of.

- Infinite scalability. No land to buy. No permits to fight. No NIMBYs. No grid interconnection queues that stretch for years. Starship is designed to carry ~100 tons to orbit per launch; at an aspirational cost of roughly $30/kg, a single flight could deploy a 40 MW data center module.
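To put the "virtually 24/7" claim into numbers, here is a back-of-the-envelope comparison of the sunlight a square metre of array collects in a year. It is a sketch under stated assumptions: the 99% sunlit fraction and the 2,000 kWh/m²/yr ground figure are illustrative inputs chosen for this example, and it ignores cell efficiency, pointing losses, and degradation.

```python
# Back-of-the-envelope annual sunlight per square metre of Sun-facing array.
# Every input below is an illustrative assumption, not a figure from the article.
SOLAR_CONSTANT_W_M2 = 1361.0  # mean solar irradiance above the atmosphere
HOURS_PER_YEAR = 8760.0


def orbital_yield_kwh_m2(sunlit_fraction: float) -> float:
    """Annual irradiation (kWh/m^2) on a Sun-pointing array lit `sunlit_fraction` of the year."""
    return SOLAR_CONSTANT_W_M2 * HOURS_PER_YEAR * sunlit_fraction / 1000.0


orbit = orbital_yield_kwh_m2(sunlit_fraction=0.99)  # assumed: dawn-dusk orbit, brief seasonal eclipses
ground = 2000.0  # assumed: sunny desert site, kWh/m^2/yr of global horizontal irradiation

print(f"orbit {orbit:,.0f} vs ground {ground:,.0f} kWh/m^2/yr -> {orbit / ground:.1f}x")
```

With these inputs the orbital array sees roughly six times the annual sunlight of a good desert site; a cloudier ground site would widen the gap.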

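Starcloud's white paper projects that a 40 MW orbital cluster would cost $8.2 million over 10 years, versus $167 million for an equivalent terrestrial facility. Those figures cover power, cooling, and (in orbit) launch and shielding, not the compute hardware that both options must buy. Even skeptics concede the energy math is seductive.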
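For a sense of where a nine-figure terrestrial number comes from, here is the electricity bill alone for a 40 MW facility running around the clock. The $0.04/kWh price is an assumption made for this sketch, not a figure quoted in the article.

```python
HOURS_PER_YEAR = 8760


def grid_energy_cost_usd(power_mw: float, years: float, price_per_kwh: float) -> float:
    """Electricity bill for a facility drawing `power_mw` continuously for `years`."""
    kwh = power_mw * 1000 * HOURS_PER_YEAR * years  # MW -> kW, times hours of operation
    return kwh * price_per_kwh


# Assumed wholesale price of $0.04/kWh; real industrial rates vary widely by region.
cost = grid_energy_cost_usd(power_mw=40, years=10, price_per_kwh=0.04)
print(f"10-year electricity bill: ${cost / 1e6:,.1f} million")
```

At that price electricity by itself comes to about $140 million, the same order of magnitude as the $167 million terrestrial figure; doubling the price doubles the bill.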

The Hard Engineering Challenges

If the physics are compelling, the engineering is humbling. Here's what still stands between this vision and a working data center in orbit:

Thermodynamics remains king. A 1 GW orbital data center needs on the order of 2.5 square kilometers of radiators at realistic emissivity. That is not a side quest; it is a small city’s worth of deployable thermal hardware that must survive micrometeorites and operate flawlessly for years.
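The 2.5 km² figure is what the Stefan-Boltzmann law gives when radiation is the only way to shed heat. This is a sketch with assumed inputs (a 300 K radiator, emissivity 0.9, a 2.7 K sink, one radiating face, and no absorbed sunlight or Earth infrared), not the calculation behind the article's number.

```python
# Radiator area needed to reject waste heat by thermal radiation alone (Stefan-Boltzmann law):
#   P = emissivity * sigma * A * sides * (T_rad^4 - T_sink^4)
# Assumed inputs: 300 K radiator, emissivity 0.9, 2.7 K sink, one radiating face, and no
# absorbed sunlight or Earth infrared (either would push the required area up).
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4


def radiator_area_m2(power_w: float, t_rad_k: float = 300.0, emissivity: float = 0.9,
                     t_sink_k: float = 2.7, sides: int = 1) -> float:
    flux_per_face = emissivity * SIGMA * (t_rad_k**4 - t_sink_k**4)  # W per m^2 per face
    return power_w / (flux_per_face * sides)


area = radiator_area_m2(1e9)  # 1 GW of waste heat
print(f"single-sided: {area / 1e6:.2f} km^2, "
      f"double-sided: {radiator_area_m2(1e9, sides=2) / 1e6:.2f} km^2")
```

Because emitted power scales with T⁴, temperature is the strongest lever: at 350 K the same gigawatt needs about 54% of the 300 K area.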

Radiation kills chips. Commercial GPUs were never designed for the space radiation environment; single energetic particles can flip bits, poison a training run, or permanently damage transistors. NASA’s High-Performance Spaceflight Computing program targets processors 100× faster than today’s space-grade parts, but radiation-tolerant hardware at leading-edge performance simply doesn’t exist yet.
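A standard mitigation for single-event upsets is triple modular redundancy (TMR): keep every value three times and take a bitwise majority vote, so one corrupted copy is out-voted. The article does not say any particular company uses it; this is a generic sketch, and the price is roughly three times the memory or compute.

```python
def tmr_vote(a: int, b: int, c: int) -> int:
    """Bitwise majority of three redundant copies: each output bit is 1 if at least two inputs set it."""
    return (a & b) | (a & c) | (b & c)


stored = 0b1011_0010
upset = stored ^ 0b0000_1000  # a single-event upset flips one bit in one copy
recovered = tmr_vote(stored, upset, stored)
print(f"{recovered:#010b}", recovered == stored)
```

TMR cannot recover when two copies are hit in the same bit, and it does nothing for permanent damage, so real systems pair it with error-correcting memory, periodic scrubbing, and checkpointing.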

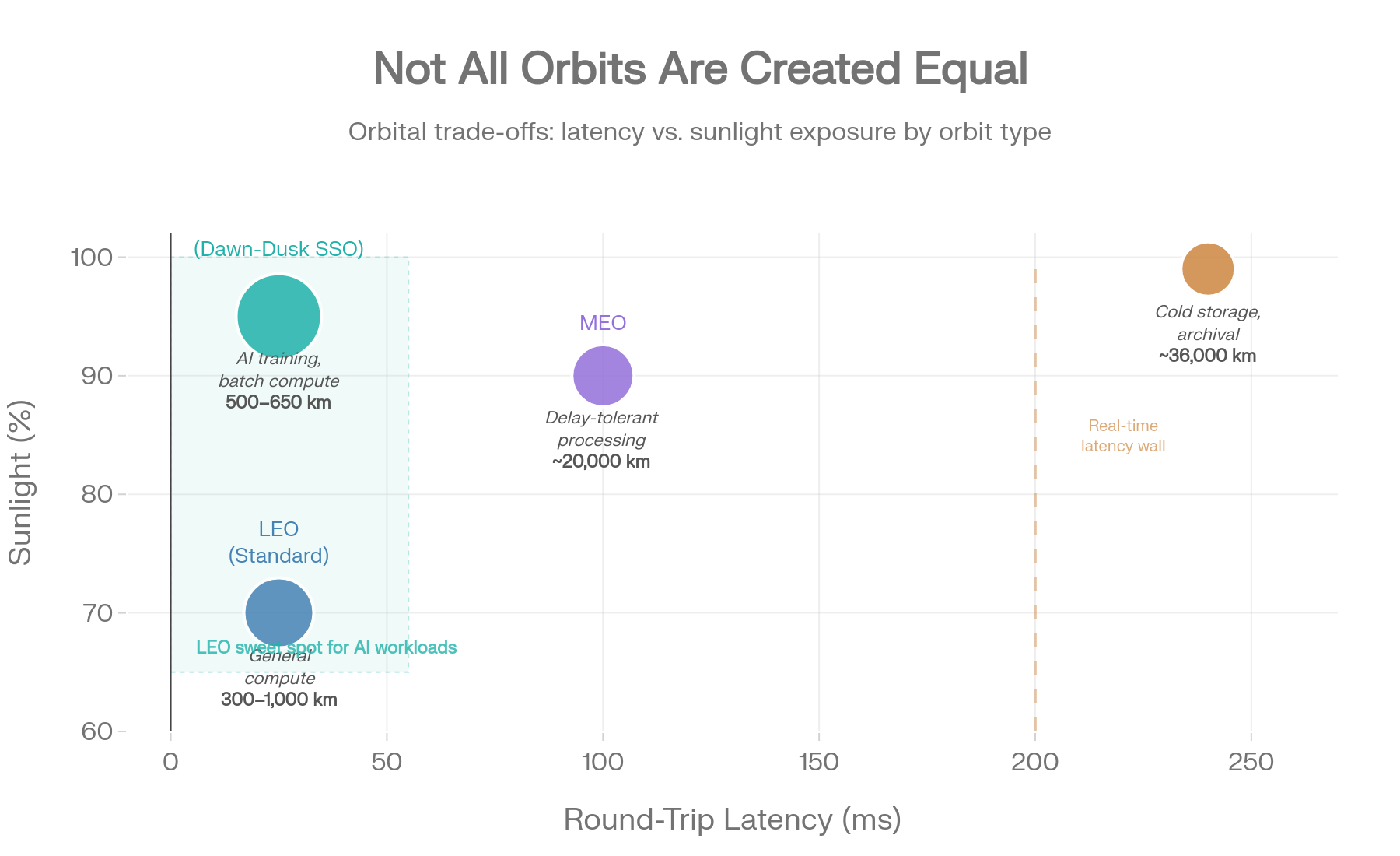

Latency is physics-capped but usable. From low Earth orbit, one-way signal time to a ground station is roughly 1–4 ms, with round-trip latency comfortably under 50 ms, similar to long-haul terrestrial links and perfectly acceptable for training and batch inference. What it does not support is ultra-low-latency, human-in-the-loop workloads. In geostationary orbit, the ~240 ms round trip is effectively a nonstarter for real-time applications.
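The 1–4 ms one-way figure falls out of simple geometry: the slant range from a ground station grows as the satellite sinks toward the horizon. A sketch assuming a spherical Earth, a 550 km altitude, and straight-line propagation at the speed of light, with no routing, queuing, or processing delay:

```python
import math

EARTH_RADIUS_KM = 6371.0
C_KM_PER_S = 299_792.458


def slant_range_km(altitude_km: float, elevation_deg: float) -> float:
    """Distance from a ground station to a satellite seen at the given elevation angle."""
    r, h = EARTH_RADIUS_KM, altitude_km
    e = math.radians(elevation_deg)
    return math.sqrt((r + h) ** 2 - (r * math.cos(e)) ** 2) - r * math.sin(e)


def one_way_ms(altitude_km: float, elevation_deg: float) -> float:
    return slant_range_km(altitude_km, elevation_deg) / C_KM_PER_S * 1000.0


for elev in (90, 45, 25):
    print(f"550 km at {elev} deg elevation: {one_way_ms(550, elev):.1f} ms one way")
print(f"GEO overhead, round trip: {2 * one_way_ms(35_786, 90):.0f} ms")
```

At 550 km this gives about 1.8 ms straight overhead and about 3.7 ms at 25 degrees elevation, while a geostationary data center sits about 239 ms away round trip before any processing.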

Bandwidth is the choke point. Google and others have shown multi-terabit inter-satellite laser links in the lab, but a serious orbital data center constellation needs one to two orders of magnitude more aggregate throughput to rival the multi-petabit fiber backbones feeding terrestrial hyperscalers. Until then, space will be an accelerator tier, not a full replacement.

You can’t send a technician. When a rack dies in a terrestrial data center, someone swaps boards within hours; in orbit, you need autonomous fault detection, graceful degradation, and robotic servicing, or you treat nodes as disposable and launch replacements. Refresh cycles shift from an operating-expense question to a heavy capex and logistics problem, with failure rates and on-orbit repairability becoming core design constraints rather than afterthoughts.
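Treating nodes as disposable turns reliability into a launch-planning number. A minimal sketch assuming a constant failure rate (the exponential model common in reliability engineering) with hypothetical inputs: the 5%/year rate is invented for illustration, and the 81-node cluster size borrows Google's Suncatcher figure.

```python
import math


def surviving_fraction(failure_rate_per_year: float, years: float) -> float:
    """Expected fraction of nodes still working, assuming a constant (exponential) failure rate."""
    return math.exp(-failure_rate_per_year * years)


def replacements_to_launch(nodes: int, failure_rate_per_year: float, years: float) -> int:
    """Expected number of original nodes lost over the period, rounded up to whole satellites."""
    return math.ceil(nodes * (1.0 - surviving_fraction(failure_rate_per_year, years)))


# Hypothetical inputs: an 81-node cluster and a 5%/year failure rate over a 5-year mission.
print(replacements_to_launch(nodes=81, failure_rate_per_year=0.05, years=5))
```

With those inputs about 18 of 81 nodes are expected to fail within five years, roughly 22% of the cluster, which a disposable-node design must either over-provision up front or replace by launch.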

Where Exactly Do You Put a Data Center in Space?

Not all orbits are created equal:

The emerging consensus favors a LEO dawn-dusk sun-synchronous orbit at 500–650 km, the same zone Google is targeting for Suncatcher. It minimizes eclipse periods for near-continuous solar power, keeps latency competitive, and, especially toward the lower end of that band, lets dead satellites decay naturally within the long-standing 25-year guideline (mitigating the debris problem).
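What makes an orbit sun-synchronous is Earth's equatorial bulge (the J2 term), which slowly rotates the orbital plane; choose the inclination so that rotation matches Earth's yearly trip around the Sun. A sketch using the standard first-order J2 nodal-precession formula for a near-circular orbit:

```python
import math

MU_EARTH = 398_600.4418  # km^3/s^2, Earth's gravitational parameter
J2 = 1.08263e-3          # Earth's oblateness coefficient
R_EQ = 6378.137          # km, equatorial radius
# The orbit plane must precess 360 degrees per tropical year to hold a fixed angle to the Sun.
REQUIRED_PRECESSION = 2 * math.pi / (365.2422 * 86_400)  # rad/s


def sso_inclination_deg(altitude_km: float, eccentricity: float = 0.0) -> float:
    a = R_EQ + altitude_km
    n = math.sqrt(MU_EARTH / a**3)  # mean motion, rad/s
    p = a * (1 - eccentricity**2)   # semi-latus rectum, km
    # J2 nodal precession: dOmega/dt = -1.5 * n * J2 * (R_EQ / p)^2 * cos(i)
    cos_i = -REQUIRED_PRECESSION / (1.5 * n * J2 * (R_EQ / p) ** 2)
    return math.degrees(math.acos(cos_i))


for alt in (500, 550, 650):
    print(f"{alt} km -> inclination {sso_inclination_deg(alt):.2f} deg")
```

Across the 500–650 km band this gives inclinations of about 97.4 to 98.0 degrees, slightly retrograde. The dawn-dusk property itself is not computed here; it comes from placing the orbit plane along the day-night terminator at launch.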

Google's Suncatcher design envisions 81+ satellites spaced 100–200 meters apart within a 1 km radius formation. Starcloud is targeting 40 MW per module with plans for multi-gigawatt constellations by decade's end.

What If Something Goes Wrong? Data Security in Orbit

This is the question every CISO will ask. The answers are surprisingly robust:

Quantum-grade encryption is already space-ready. Quantum Random Number Generators leverage quantum physics to produce truly unpredictable encryption keys, and they’re already flying on commercial satellites. China’s Micius satellite has demonstrated satellite-based Quantum Key Distribution over intercontinental distances, including entanglement-based QKD between ground stations more than 1,000 km apart; any attempt to intercept the signal disturbs the quantum state and shows up as an elevated error rate. A separate field trial in Singapore achieved a secret key rate of 2.392 kbps with a quantum bit error rate below 2%, validating QKD over commercial data center infrastructure.
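The "disturbs the quantum state" claim is the heart of BB84, the best-known QKD protocol. The toy below is a classical Monte Carlo of the measurement statistics, not a quantum simulation, and assumes ideal single photons, a noiseless channel, and an eavesdropper using the simple intercept-resend attack.

```python
import random


def bb84_qber(n_photons: int, eavesdrop: bool, seed: int = 1) -> float:
    """Toy classical simulation of BB84 sifting; returns the quantum bit error rate (QBER)."""
    rng = random.Random(seed)
    errors = sifted = 0
    for _ in range(n_photons):
        bit, basis = rng.getrandbits(1), rng.getrandbits(1)  # Alice's bit and basis (0 = +, 1 = x)
        sent_bit, sent_basis = bit, basis
        if eavesdrop:
            # Intercept-resend: Eve measures in a random basis and re-sends what she saw.
            eve_basis = rng.getrandbits(1)
            eve_bit = bit if eve_basis == basis else rng.getrandbits(1)
            sent_bit, sent_basis = eve_bit, eve_basis
        bob_basis = rng.getrandbits(1)
        # Measuring in the wrong basis yields a uniformly random result.
        bob_bit = sent_bit if bob_basis == sent_basis else rng.getrandbits(1)
        if bob_basis == basis:  # sifting: keep only rounds where Alice's and Bob's bases match
            sifted += 1
            errors += bob_bit != bit
    return errors / sifted


print(f"no eavesdropper:  QBER = {bb84_qber(20_000, eavesdrop=False):.3f}")
print(f"intercept-resend: QBER = {bb84_qber(20_000, eavesdrop=True):.3f}")
```

Without an eavesdropper the sifted key is error-free; intercept-resend pushes the error rate to about 25%, because Eve picks the wrong basis half the time and each wrong pick scrambles Bob's result half the time. Real links also see errors from noise, so protocols abort above a threshold (around 11% for BB84 with standard one-way post-processing). For comparison, the Singapore trial's sub-2% error rate is well below that level.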

Post-quantum cryptography is maturing fast. Forward Edge AI’s Isidore Quantum platform, developed from NSA innovation and aligned with CNSA 2.0 standards, provides AI-driven, zero-trust, post-quantum encryption designed for satellite-to-satellite and satellite-to-ground links. It has been tested by the U.S. Space Force, NASA, and defense partners, and is FIPS 140-3 certified.

The “tamper-proof vault” concept. An orbital data center is inherently harder to physically access than virtually any terrestrial facility. Combined with automated data-wiping protocols triggered by anomalous approach vectors, orbital compute could become one of the most physically secure data environments ever built.
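The "anomalous approach" trigger can be sketched as a closest-approach check. Everything here is hypothetical: the 200 m keep-out radius, the 10-minute horizon, and the `should_zeroize` name are invented for illustration; straight-line relative motion is a simplification that only holds over short windows (real proximity operations use relative-orbit models such as Clohessy-Wiltshire); and "wiping" is assumed to mean erasing encryption keys so stored data becomes unreadable.

```python
import math

KEEP_OUT_M = 200.0  # hypothetical keep-out radius around the data center satellite
HORIZON_S = 600.0   # hypothetical look-ahead window


def closest_approach(rel_pos_m, rel_vel_m_s):
    """Time (s) and miss distance (m) of closest approach, assuming straight-line relative motion."""
    v2 = sum(v * v for v in rel_vel_m_s)
    dot = sum(p * v for p, v in zip(rel_pos_m, rel_vel_m_s))
    t = 0.0 if v2 == 0.0 else max(0.0, -dot / v2)  # an object moving away is closest right now
    miss = [p + v * t for p, v in zip(rel_pos_m, rel_vel_m_s)]
    return t, math.sqrt(sum(m * m for m in miss))


def should_zeroize(rel_pos_m, rel_vel_m_s) -> bool:
    """Hypothetical trigger: erase keys if an object will breach the keep-out zone within the horizon."""
    t, miss = closest_approach(rel_pos_m, rel_vel_m_s)
    return t <= HORIZON_S and miss < KEEP_OUT_M


print(should_zeroize((1000.0, 0.0, 0.0), (-10.0, 0.0, 0.0)))   # head-on approach
print(should_zeroize((1000.0, 500.0, 0.0), (-10.0, 0.0, 0.0)))  # passes 500 m away
```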

Redundancy is the real answer. Just as terrestrial cloud providers replicate data across availability zones, orbital systems would maintain ground-based backup copies. A catastrophic satellite failure means temporarily losing compute capacity — not permanently losing data.

What Still Needs to Be Invented

Several critical technologies must mature before orbital data centers reach hyperscale:

Radiation-tolerant commercial chips: Bridging the gap between NASA-grade reliability and NVIDIA-grade performance. Google’s radiation testing of its Trillium TPU found surprising resilience, and NASA’s HPSC project and RadPC program are pushing toward processors 100× more capable than current space-rated chips, but no chip yet combines proven radiation tolerance with leading-edge commercial performance.

Multi-Tbps operational laser links: Google’s Project Suncatcher demonstrated 1.6 Tbps bidirectional on the bench using a single transceiver pair, but operational constellations will need at least an order of magnitude more aggregate throughput to rival the multi-petabit fiber backbones feeding terrestrial hyperscalers.
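To see why bandwidth is the choke point, compare how long it takes to move a petabyte of training data over a single link. The 1.6 Tbps bidirectional figure is treated as 0.8 Tbps in each direction (assuming a symmetric link), the 1 Pbps backbone is a round number standing in for "multi-petabit", and protocol overhead is ignored.

```python
def transfer_seconds(data_bytes: float, link_bps: float) -> float:
    """Seconds to move `data_bytes` over a link running at `link_bps`, ignoring protocol overhead."""
    return data_bytes * 8 / link_bps


PETABYTE = 1e15
links = [
    ("0.8 Tbps (one direction of a 1.6 Tbps link)", 0.8e12),
    ("1 Pbps fiber backbone", 1e15),
]
for label, bps in links:
    print(f"1 PB over {label}: {transfer_seconds(PETABYTE, bps):,.0f} s")
```

That is nearly three hours versus eight seconds for the same petabyte, which is why an orbital cluster must either aggregate many parallel links or favor workloads that move little data relative to the compute they consume.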

On-orbit robotic assembly: Thales Alenia Space’s EROSS IOD demonstrator, now targeting 2027, will validate satellite rendezvous, capture, docking, and payload exchange in orbit — enabling the construction of structures too large for a single launch.

Advanced thermal radiators: Variable-emittance surfaces, droplet radiators, and loop heat pipes are needed to handle megawatt-scale waste heat. Google’s own Suncatcher team flags thermal management as one of the most significant engineering challenges remaining for orbital compute.

Ultra-lightweight solar arrays: Companies like Solestial are pushing toward 200–800 W/kg at the array level, with far-term concepts targeting over 1,000 W/kg — a step change from today’s roughly 100 W/kg state of the art that would dramatically increase power delivered per launch.
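Specific power translates directly into how much generating capacity one launch can lift. A crude upper-bound sketch, assuming a 100-tonne payload (the Starship figure quoted earlier) made up entirely of solar array, with no compute, radiators, or structure:

```python
LAUNCH_PAYLOAD_KG = 100_000  # assumed ~100 t per launch


def array_power_mw(specific_power_w_per_kg: float, payload_kg: float = LAUNCH_PAYLOAD_KG) -> float:
    """Solar-array power one launch could deliver if the entire payload were array."""
    return specific_power_w_per_kg * payload_kg / 1e6


for w_per_kg in (100, 200, 800, 1000):
    print(f"{w_per_kg:>5} W/kg -> {array_power_mw(w_per_kg):.0f} MW per launch")
```

At today's roughly 100 W/kg, even a launch carrying nothing but arrays tops out near 10 MW, so a 40 MW module per launch implicitly assumes array and system masses well beyond the current state of the art.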

Low-emission, high-cadence launchers: The EU-funded ASCEND feasibility study found that space data centers need rockets emitting one-tenth the carbon of current vehicles over their full lifecycle to deliver a net environmental benefit over terrestrial alternatives.

The Debris Elephant in the Room

Kessler syndrome, a cascading chain reaction of orbital collisions, is the existential risk nobody in the space data center pitch deck wants to dwell on. Over 11,800 active satellites already crowd LEO, with SpaceX's Starlink accounting for more than 7,000. One economic model puts the threshold at roughly 72,000 satellites before cascading debris becomes unavoidable.

Now add a million-satellite data center constellation to that equation. Active debris removal, collision avoidance AI, and responsible deorbiting protocols aren't just nice-to-haves—they're prerequisites for this industry to exist.
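Why a million extra satellites matters so much: in a crude "kinetic gas" picture where every object shares one shell, the collision rate scales with the number of object pairs, which grows with the square of the population. This ignores altitude separation between shells, orbit geometry, maneuvering, and debris fragments, so treat it as a scaling intuition, not a risk estimate.

```python
def pair_count(n: int) -> int:
    """Number of distinct object pairs, each a potential collision."""
    return n * (n - 1) // 2


today = 11_800
with_constellation = today + 1_000_000
ratio = pair_count(with_constellation) / pair_count(today)
print(f"{ratio:,.0f}x more object pairs")
```

Under that crude model, going from about 11,800 to about a million objects multiplies the number of potential collision pairs by more than 7,000.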

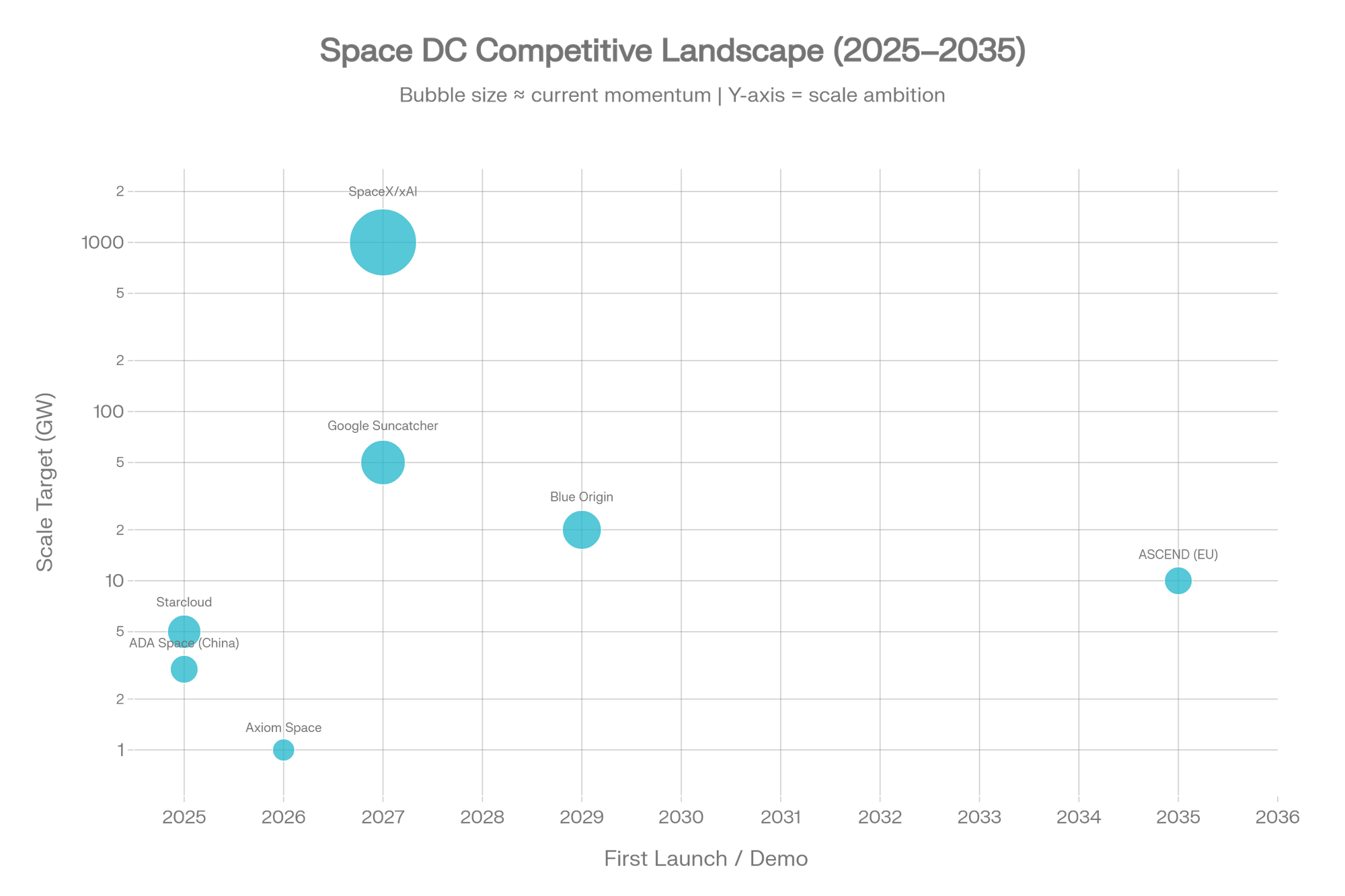

So Who Is Doing It?

As of February 2026:

- Starcloud (YC-backed) flew the first H100 in orbit in November 2025 and is targeting multi-GW scale by around 2030.
- Google’s Suncatcher has demonstrated a 1.6 Tbps laser link and aims for a TPU constellation, with launch by 2027.
- SpaceX/xAI filed with the FCC in January 2026 for a 1M-satellite network targeting 100 GW/year of capacity.
- Blue Origin has had a dedicated team working for over a year toward a 5,000-satellite system.
- Axiom Space launched the first two orbital data centers in January 2026, with a modular expansion roadmap.
- ADA Space in China has operated a 12-satellite cluster since spring 2025 and is working toward a 2,800-satellite constellation.
- The EU/Thales ASCEND program has completed its feasibility study, with an EROSS robotics demo planned for 2027 and a 1 GW goal before 2050.

The Opulentia Investment Lens

The terrestrial data center market is projected to surpass $300 billion in 2026 and approach $700 billion by 2034 at roughly 11% CAGR, with some analysts sizing it even higher. More importantly, AI-specific data center spending alone is projected to exceed $5 trillion over the next five years. This creates a massive “pull” for any technology that can deliver cheaper compute at scale.
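The growth figures hang together: growing from $300 billion in 2026 to $700 billion in 2034 implies a compound annual growth rate close to the quoted 11%. A quick check:

```python
def cagr(start: float, end: float, years: float) -> float:
    """Compound annual growth rate implied by growing from `start` to `end` over `years`."""
    return (end / start) ** (1.0 / years) - 1.0


rate = cagr(300, 700, 2034 - 2026)
print(f"implied CAGR: {rate:.1%}")
```

The result is about 11.2% a year, consistent with the roughly 11% CAGR cited.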

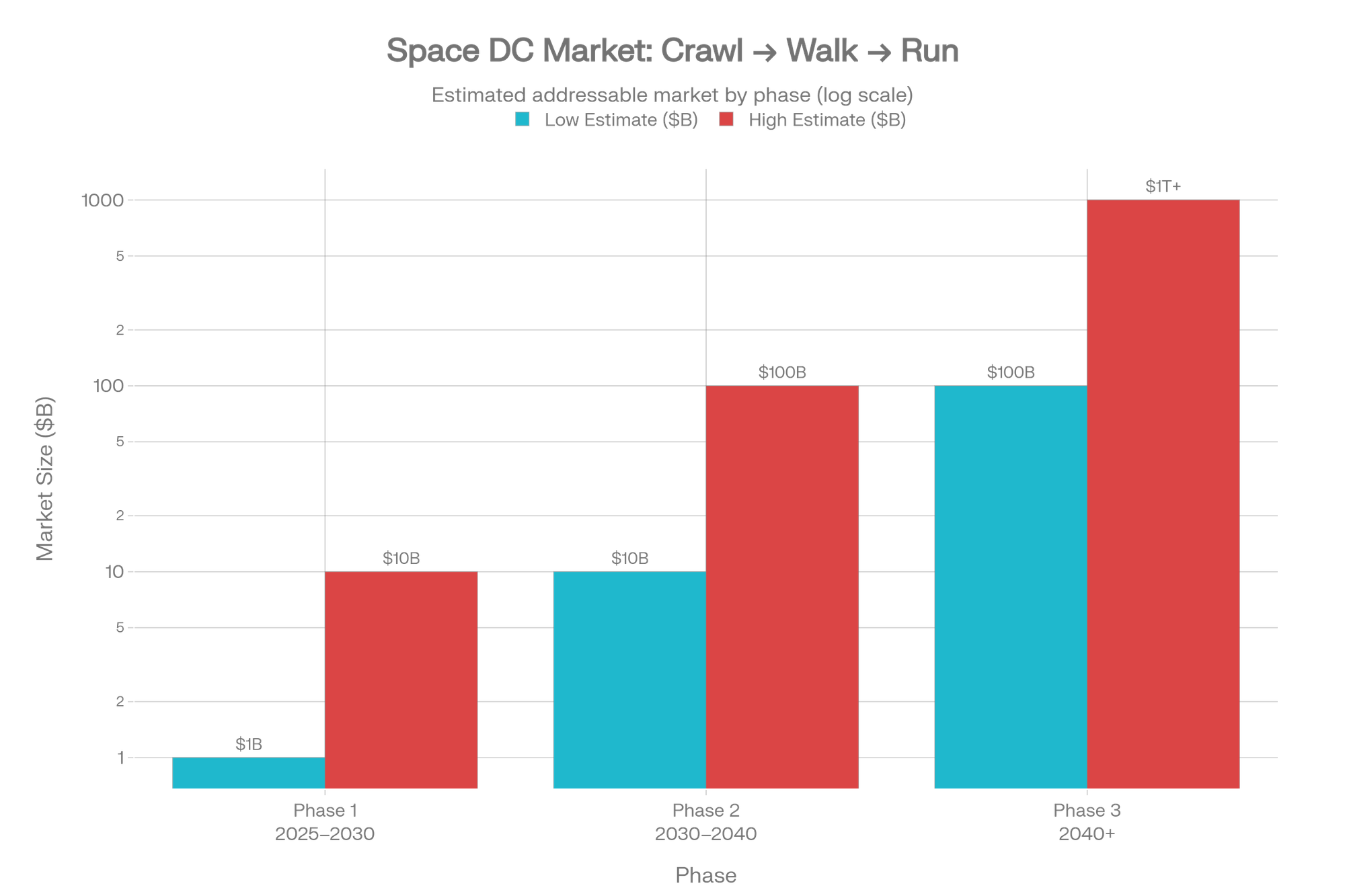

The investment opportunity unfolds in three phases:

Phase 1 — Crawl (Now): Demonstrators, satellite edge compute, secure orbital vaults. Estimated market: $1–10 billion. The picks-and-shovels plays include radiation-hardened chip designers, deployable solar array manufacturers, and thermal management specialists.

Phase 2 — Walk: Multi-hundred-kilowatt clusters serving batch AI training and sovereign compute. Market expands to $10–100 billion. Launch providers with high cadence and low cost per kilogram become critical infrastructure.

Phase 3 — Run: Megawatt-to-gigawatt-scale facilities competitive with terrestrial hyperscale on pure economic terms. Potentially a $100 billion to $1 trillion+ market. This is where the thesis either validates spectacularly or fades into aerospace history.

The bull case relies on SpaceX driving launch costs below $200/kg by the mid-2030s, making orbital compute cheaper than terrestrial alternatives for latency-tolerant workloads. The bear case points to unproven thermal management at scale, chip degradation from radiation, and the regulatory morass of deploying a million new objects into already crowded orbits.
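The $200/kg threshold can be sanity-checked with a deliberately simple break-even: the launch price at which lifting a kilowatt of orbital capacity costs the same as buying a kilowatt of grid electricity over the satellite's life. All inputs are assumptions for illustration ($0.04/kWh grid power, a 5-year life, 100 W of usable power per kilogram of whole satellite), and launch is the only orbital cost counted.

```python
HOURS_PER_YEAR = 8760


def breakeven_launch_usd_per_kg(grid_usd_per_kwh: float, lifetime_years: float,
                                specific_power_w_per_kg: float) -> float:
    """Launch price at which lifting 1 kW of orbital power costs the same as buying 1 kW
    of grid electricity for the same lifetime. Only launch cost is counted on the orbital side."""
    grid_cost_per_kw = grid_usd_per_kwh * HOURS_PER_YEAR * lifetime_years  # $ per kW over the lifetime
    kg_per_kw = 1000.0 / specific_power_w_per_kg                           # launched mass per kW
    return grid_cost_per_kw / kg_per_kw


# Illustrative assumptions: $0.04/kWh, 5-year satellite life, 100 W/kg at the whole-satellite level.
print(f"${breakeven_launch_usd_per_kg(0.04, 5, 100):.0f}/kg")
```

With those inputs the break-even is about $175/kg, in the same neighborhood as the bull case's $200/kg; cheaper grid power or shorter-lived satellites pull it down, and lighter satellites push it up.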

For venture investors, the near-term opportunity sits in the enabling technology stack—not the data centers themselves. Quantum-secure communications, radiation-tolerant processors, robotic on-orbit assembly, advanced radiators, and high-efficiency deployable solar are all fundable verticals with applications well beyond orbital compute.

The companies that figure out how to move computing off-planet won't just be building data centers. They'll be building the infrastructure layer for an economy that outgrows Earth. The question for investors isn't whether this happens—it's which layer of the stack captures the most value, and when.

This article is inspired by the white paper “Why we should train AI in space,” by Lumen Orbit (now Starcloud), v1.03, September 2024. My use of these ideas is limited to fair-use discussion, critique, and education; I do not claim, and expressly disclaim, any ownership, authorship, or proprietary interest in the white paper.